“Baldi” the Virtual Tutor Helps Hearing-Impaired Children to Learn Speech


Information technology (IT) research has created a 3D computerized tutor that helps profoundly deaf children develop their conversational skills. “Baldi,” the animated instructor, converses using the latest technologies for speech recognition and generation, showing students how to understand and produce spoken language. The conversational agent for language training was developed through a three-year, $1.8 million National Science Foundation (NSF) grant.

Baldi could transform the way language is taught to hearing-impaired children. In addition to helping students accurately produce expressive speech, the interactive system’s curriculum-development software lets teachers and students customize classwork. Students can review classroom and homework lessons to improve vocabulary, reading and spelling, in addition to speech.

The project is led by Ron Cole at the University of Colorado, Boulder. Students in grades 6-12 at the Tucker-Maxon Oral School in Portland, Oregon, are the first to use Baldi in the pilot study. Also contributing to the research are the Oregon Graduate Institute’s Center for Spoken Language Understanding, the Perceptual Science Laboratory at the University of California, Santa Cruz (UCSC), and the University of Edinburgh, Scotland. The tongue model used in Baldi is based on data collected by researchers at Johns Hopkins University in Baltimore.

Based on NSF-supported work by UCSC psychology professor Dominic Massaro, Baldi’s 3D animation (including articulated mouth, teeth and tongue) produces accurate facial movements that are synchronized to its audible speech, which can be either a recorded human voice or computer-generated sounds. As a virtual being, Baldi is tireless, allowing students to work at a comfortable pace as they study how subtle facial movements produce desired sounds.

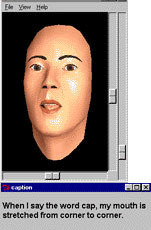

These pictures show the 3D animated conversational agent, named “Baldi,” that acts as a virtual tutor for hearing-impaired children at the Tucker-Maxon Oral School as part of Ron Cole’s NSF-funded project. For more details, see http://cslu.cse.ogi.edu/tm. The project will be featured on ABC-TV’s Prime Time Thursday, March 8, 2001, at 10:00 p.m. EDT.


According to Cole, the project began “with a vision in the mid-1990s to develop free software for spoken language systems and their underlying technologies. We want to give researchers the means to improve and share language tools that enhance learning and increase access to information.”

At the Tucker-Maxon school, Baldi is used by profoundly deaf children whose hearing is enhanced through amplification or electrical stimulation of the cochlea. Teachers and students alike participate in designing the project software and applications, and that involvement provides real-time feedback to the researchers. The teachers use a toolkit, available via the web at no cost to researchers and educators, with graphical authoring software that lets them design their own multimedia courseware.

“The students report that working with Baldi is one of their favorite activities,” Cole said. “The teachers and speech therapist report that both learning and language skills are improving dramatically. Activities in the classroom are more efficient, since students can work simultaneously on different computers, with each receiving individualized instruction, while the teacher observes and interacts with selected students.”

This project is the first to integrate emerging language technologies to create an animated conversational agent, and to apply this agent to learning and language training, Cole said. Baldi is state of the art in its integration of speech recognition, speech synthesis and facial animation technologies. The graphical authoring tools are likewise cutting-edge examples of rapid prototyping for the development of conversational agents.

To create Baldi’s speech recognition capabilities, the researchers compiled a database of speech from more than 1,000 children. Those samples then shaped an algorithm for recognizing fine details in the children’s speech. In addition, the animated speech that Baldi produces from textual input is accurate enough to be intelligible to users who read lips.

Results from this project can be incorporated into animated conversational agents for applications beyond hearing impairment, such as learning new languages (e.g., English as a Second Language). They may also be useful for diagnosing or treating speech and reading disorders.

Last fall, Cole received a five-year, $4 million award from NSF’s Information Technology Research initiative. The new project will develop interactive books and virtual tutors for children with reading disabilities. These successors to Baldi will use the latest technologies by which computers can interpret facial expressions, integrating feedback from audible and visible speech cues.


Please note this project will be featured on the ABC-TV news magazine PrimeTime Thursday at 10:00 p.m. EDT on March 8, 2001. For more information, see http://www.abcnews.go.com/sections/primetime/

For examples of Baldi, see: http://cslu.cse.ogi.edu/tm

For more about the Tucker-Maxon Oral School, see: http://www.oraldeafed.org/schools/tmos/